Okay, so… I just deployed my full-stack app to production.

I thought it would take 30 minutes.

It took 2 hours. Two. Hours.

So here’s the deal:

I’m going to walk you through exactly what happened.

The good parts, the frustrating parts, and—most importantly—the solutions that actually worked.

Let’s go.

The Stack

Before I dump all my problems on you, here’s what I’m working with:

- Backend: Node.js + Express

- Frontend: React + Vite

- Database: MongoDB

- Cache: Redis

- Server: Ubuntu VPS with 3.8GB RAM (budget-friendly, but… I’ll get to that)

- Containerization: Docker Compose

- Web Server: Nginx

- SSL: Let’s Encrypt via Certbot

Alright, let’s dive into the chaos.

Step 1: Server Setup (This Part Was Actually Fine)

Creating a Non-Root User

First things first—I’m not running everything as root. That’s just asking for trouble.

# SSH into your VPS

ssh root@your-vps-ip

# Create deploy user

adduser deploy

# Giving the deploy user admin privileges.

usermod -aG sudo deploy

# Switch into the new user’s account

su - deploy

Easy enough.

This part?

Smooth sailing. No complaints.

Installing Everything

sudo apt update && sudo apt upgrade -y

sudo apt install -y curl git ufw fail2ban nginx

This installs these tools on your server:

- curl → tool for making HTTP requests.

- git → version control (clone your repo, etc).

- ufw → firewall for controlling what ports are open.

- fail2ban → security tool that bans IPs after repeated attacks.

- nginx → web server that will serve your app.

-y means “don’t ask me questions, just install everything.”

Done. Moving on.

Firewall Setup

sudo ufw allow OpenSSH

sudo ufw allow 'Nginx Full' # Ports 80 & 443

sudo ufw enable

If you don’t allow OpenSSH before enabling UFW (Uncomplicated Firewall), you can lock yourself out of the server.

That’s why I had to run sudo ufw allow OpenSSH first.

Done.

On to the fun stuff.

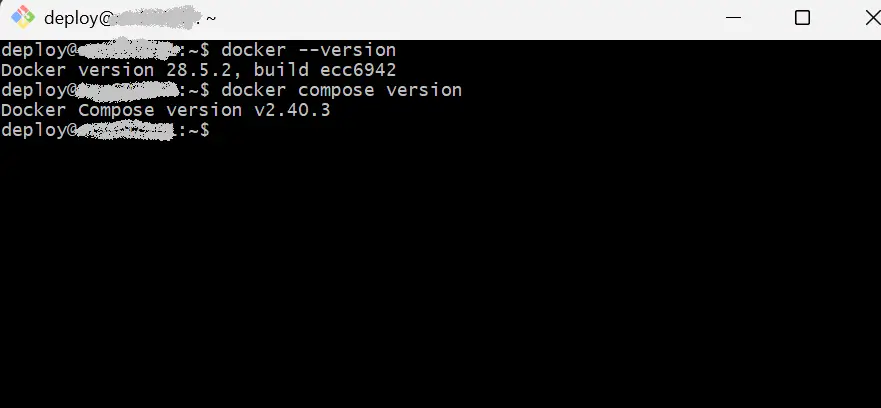

Step 2: Docker (Where Things Got Interesting)

I’ve worked with Docker before, but production deployment has its own set of challenges.

The networking nuances, service discovery, and container orchestration—each environment teaches you something new.

# Install Docker

curl -fsSL https://get.docker.com | sh

sudo usermod -aG docker $USER

# Log out and back in (this is important!)

exit

ssh deploy@your-vps-ip

# Install Docker Compose plugin

sudo apt install -y docker-compose-plugin

# Verify it worked

docker --version

docker compose version

Cool, Docker is installed.

Now the real fun begins.

Step 3: Backend Deployment (Where Everything Went Wrong)

Cloning the Repo

cd ~

mkdir -p apps && cd apps

git clone https://github.com/youruser/your-backend.git backend

cd backend

New to Linux commands?

If you’re not familiar with commands like cd, mkdir, ls, or navigating the Linux file system, check out this comprehensive Linux file system commands guide. It’ll help you feel more comfortable working in the terminal.

Smooth so far.

But as any experienced developer knows, deployment always has surprises.

Setting Up Environment Variables

I copied my .env.example to .env.production and started filling it out.

Here’s the critical configuration that makes or breaks your deployment:

NODE_ENV=production

PORT=

MONGO_URI=

REDIS_HOST=

REDIS_PORT=

REDIS_URL=

LOG_TO_FILE=true

Key thing to remember: Inside Docker containers, localhost doesn’t mean what you think it means. Each container has its own network namespace. So instead of localhost, you use the service name from your docker-compose.yml. In this case, redis and mongo.
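To make the service-name point concrete, here is a minimal sketch (not the app's actual config loader) of resolving connection settings with the Compose service names as fallbacks. The helper names `loadConfig` and `findLocalhostSettings`, the default port 3000, and the default Mongo URI are assumptions for illustration only.

```javascript
// Hypothetical helper: resolve connection settings from the environment,
// falling back to the Compose service names ("mongo", "redis"), not localhost.
function loadConfig(env) {
  const redisHost = env.REDIS_HOST || 'redis';
  const redisPort = Number(env.REDIS_PORT || 6379);
  return {
    port: Number(env.PORT || 3000),
    // Assumed default: service name "mongo", MongoDB's default port, database "myDB".
    mongoUri: env.MONGO_URI || 'mongodb://mongo:27017/myDB',
    redisUrl: env.REDIS_URL || `redis://${redisHost}:${redisPort}`,
  };
}

// Hypothetical check: list settings that still point at the container's own loopback.
function findLocalhostSettings(config) {
  const loopback = /\/\/(localhost|127\.0\.0\.1|\[::1\])(?=[:/]|$)/;
  return Object.entries(config)
    .filter(([, value]) => loopback.test(String(value)))
    .map(([key]) => key);
}

const config = loadConfig(process.env);
const suspicious = findLocalhostSettings(config);
if (suspicious.length > 0) {
  console.warn(`These settings point at localhost inside the container: ${suspicious.join(', ')}`);
}
```

Running a check like this at startup would flag a localhost URL before it turns into a wall of connection errors. It only looks at simple URLs without credentials, so treat it as a starting point.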

The Dockerfile

Create a Dockerfile (if it doesn’t already exist):

vim Dockerfile

# or use nano if you prefer

nano Dockerfile

Quick tip: If you’re new to vim (or if you’ve ever gotten stuck trying to exit it, we’ve all been there), I’ve got a step-by-step vim guide that covers the basics, including how to save and exit without losing your mind.

Paste:

FROM node:20-alpine

WORKDIR /app

COPY package*.json ./

RUN npm ci

COPY . .

ENV NODE_ENV=production

EXPOSE 3000

CMD ["npm", "run", "start"]

Save.

Pretty standard. Nothing fancy here.

docker-compose.yml (The Configuration That Tested My Patience)

vim docker-compose.yml

# or

nano docker-compose.yml

Tweak names/ports as needed:

services:

  api:

    build: .

    container_name: backend-api

    restart: unless-stopped

    ports:

      - "127.0.0.1:3000:3000" # Only bind to localhost (security!)

    env_file:

      - .env.production

    depends_on:

      - mongo

      - redis

    volumes:

      - ./logs:/app/logs

  mongo:

    image: mongo:6

    restart: unless-stopped

    volumes:

      - mongo_data:/data/db

    environment:

      - MONGO_INITDB_DATABASE=myDB

  redis:

    image: redis:7-alpine

    restart: unless-stopped

volumes:

  mongo_data:

This keeps the API bound only to localhost; Nginx will proxy to it.

Looks simple, right?

Hah. Just wait.

Building and Running

docker compose build

docker compose up -d

docker compose logs -f api

I ran this.

Everything started.

The containers were up.

But when I checked the health endpoint,

curl -s http://127.0.0.1:3000/healthz

I saw this:

{

"status": "degraded",

"mongo": 1,

"redis": "disconnected",

"timestamp": "2025-11-10T16:38:30.601Z"

}

(Note: Timestamp shown for context)

MongoDB was connected, but Redis showed as “disconnected.”
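The health endpoint's code isn't shown here, so the following is only a sketch of how a payload like the one above could be assembled. It assumes that "mongo" is a Mongoose-style connection readyState (where 1 means connected) and that the Redis side is a boolean such as node-redis's `client.isReady`; `buildHealth` is a hypothetical name.

```javascript
// Sketch: assemble a /healthz payload from two dependency checks.
// Assumptions: mongoReadyState follows Mongoose's readyState convention
// (1 = connected) and redisReady is a boolean like node-redis's client.isReady.
function buildHealth(mongoReadyState, redisReady, now = new Date()) {
  const mongoOk = mongoReadyState === 1;
  return {
    // "ok" only when every dependency is reachable, otherwise "degraded".
    status: mongoOk && redisReady ? 'ok' : 'degraded',
    mongo: mongoReadyState,
    redis: redisReady ? 'connected' : 'disconnected',
    timestamp: now.toISOString(),
  };
}

console.log(buildHealth(1, false));
```

Reporting each dependency separately is what made this failure easy to spot: the overall status said "degraded", and the per-service fields pointed straight at Redis.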

The Problems (And How I Solved Them)

Problem #1: Redis Connection Refused (The node-redis v4 Configuration Issue)

The health check revealed the issue, but the real problem was in the logs:

ECONNREFUSED ::1:6379

Over and over.

Every few seconds.

My logs were just… spam.

My Debugging Process:

I started with the standard troubleshooting checklist:

- Verified Redis container was running and healthy

- Checked network connectivity between containers

- Confirmed environment variables were being passed correctly

- Reviewed Redis client configuration against the latest documentation

The error pointed to a connection issue, but the containers were running fine. That’s when I realized the problem wasn’t with the infrastructure—it was with how the Redis client was being configured.

The Root Cause:

node-redis v4 (the Node.js Redis client, not the Redis server) changed how you configure the client.

The old way with host and port options?

Those are ignored now.

The client just… doesn’t use them.

No error, no warning. It silently falls back to the default, localhost:6379, which is exactly the ::1:6379 address in the log.

The Fix (That Actually Worked):

// ❌ This doesn't work in node-redis v4 (but no error tells you that)

createClient({

host: 'localhost',

port: 6379

})

// ✅ This is what you need

const redisUrl = process.env.REDIS_URL || `redis://${process.env.REDIS_HOST || 'redis'}:${process.env.REDIS_PORT || 6379}`;

this.client = createClient({

url: redisUrl,

socket: {

reconnectStrategy: (retries) => Math.min(retries * 100, 3000),

},

});

Once I identified the root cause, the fix was straightforward.

I updated the code to use node-redis v4’s URL-based configuration, rebuilt the container, and it worked immediately.

curl -s http://127.0.0.1:3000/healthz

{

"status": "ok",

"mongo": 1,

"redis": "connected",

"timestamp": "2025-11-10T17:01:27.727Z"

}

(Note: Timestamp shown for context)

Awesome!

Backend container is now up and running successfully.

The solution itself is simple—the challenge was identifying the root cause.

Redis was showing “disconnected” because inside the container it tries to connect to localhost:6379.

Within Docker, localhost points to the container itself, not the Redis service.
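The reconnectStrategy in the fix above is also what stops the log spam from hammering Redis at a fixed interval. Here is the same rule pulled out as a standalone function so you can see the delays it produces; `reconnectDelay` is just an illustrative name.

```javascript
// Same backoff rule as the reconnectStrategy above: wait 100 ms more per
// retry, capped at 3000 ms.
const reconnectDelay = (retries) => Math.min(retries * 100, 3000);

for (const retries of [1, 5, 30, 100]) {
  console.log(`retry ${retries}: wait ${reconnectDelay(retries)} ms`);
}
```

The cap matters: without it, a long outage would push the delay up indefinitely and the client would take a long time to notice Redis coming back.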

Problem #2: Port Already in Use (The Quick Win)

This one was straightforward to resolve.

# Find what's using port 3000

sudo lsof -i :3000

I verified it was a leftover development server (not a critical service), so I terminated it:

# Kill it (only after confirming it's safe to do so)

sudo kill <PID>

Best Practice: Always verify what’s running on a port before killing it.

If you’re unsure, the safer approach is to change the port mapping in docker-compose.yml instead:

ports:

  - "127.0.0.1:4000:3000" # Use a different host port

Step 4: Nginx Reverse Proxy (The Part That Actually Worked)

Now I need to make the backend reachable from the outside via Nginx and HTTPS.

This part?

Smooth sailing.

Nginx is amazing.

Here’s what I did:

Install and Enable Nginx

sudo apt install -y nginx

sudo systemctl enable --now nginx

Then verify:

sudo systemctl status nginx

Nginx is running successfully.

Creating the Server Block

This will proxy api.mydomain.com (the backend domain) to the container on 127.0.0.1:3000.

sudo vim /etc/nginx/sites-available/backend-api.conf

Content:

server {

listen 80;

listen [::]:80;

server_name api.mydomain.com;

# Security headers

add_header X-Frame-Options SAMEORIGIN;

add_header X-Content-Type-Options nosniff;

add_header Referrer-Policy no-referrer;

location / {

proxy_pass http://127.0.0.1:3000;

proxy_set_header Host $host;

proxy_set_header X-Real-IP $remote_addr;

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

proxy_set_header X-Forwarded-Proto $scheme;

proxy_buffering off;

}

}

Enabled the Site and Tested

sudo ln -s /etc/nginx/sites-available/backend-api.conf /etc/nginx/sites-enabled/

sudo nginx -t

sudo systemctl reload nginx

Tested it.

Worked perfectly.

Before moving to HTTPS, I confirmed the proxy was working correctly.

Everything connected smoothly.

Finally, something that just worked without any debugging!
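One thing worth knowing about the X-Forwarded-For header set in the server block above: because $proxy_add_x_forwarded_for appends to whatever the client already sent, only the last entry comes from Nginx itself. The sketch below shows one way the backend could read it, assuming Nginx is the only proxy in front of the app; `clientIp` is a hypothetical helper, not code from the project.

```javascript
// Assumption: exactly one trusted proxy (Nginx) sits in front of the app.
// Nginx appends the address it saw to any X-Forwarded-For the client sent,
// so the LAST entry is trustworthy; earlier entries can be spoofed.
function clientIp(headers, socketAddress) {
  const forwarded = headers['x-forwarded-for']; // Node lowercases header names
  if (!forwarded) return socketAddress;
  const hops = forwarded
    .split(',')
    .map((hop) => hop.trim())
    .filter(Boolean);
  return hops.length > 0 ? hops[hops.length - 1] : socketAddress;
}

console.log(clientIp({ 'x-forwarded-for': '10.0.0.1, 203.0.113.7' }, '127.0.0.1'));
```

In an Express app you would more likely lean on the built-in setting, `app.set('trust proxy', 1)`, which makes `req.ip` and `req.protocol` honor the headers from one proxy hop.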

Step 5: SSL Certificate (The Free Part)

Let’s Encrypt is amazing.

Free SSL certificates? Yes.

sudo apt install -y certbot python3-certbot-nginx

sudo certbot --nginx -d api.mydomain.com

Followed the prompts.

Certbot does all the heavy lifting.

It even updates your Nginx config automatically.

I tested it:

curl -I https://api.mydomain.com/healthz

HTTP/2 200. Beautiful.

Backend is live over HTTPS at api.mydomain.com.

Here’s what I’ve accomplished:

- Docker stack is healthy (healthz shows status: “ok”)

- Redis/Mongo connected via internal services

- Nginx proxies api.mydomain.com → `127.0.0.1:3000`

- Let’s Encrypt certificate installed; HTTPS check passes

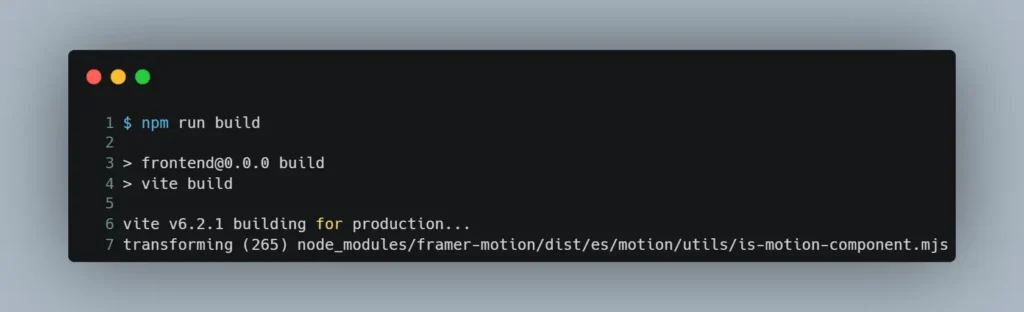

Step 6: Frontend Deployment (Where My VPS Almost Died)

Building the Frontend

I cloned the repo:

cd ~/apps

git clone https://github.com/<my-account>/<frontend-repo>.git frontend

cd frontend

Then ran

npm ci

and then…

npm run build

And it hung.

Just… hung there.

For 10 minutes.

Nothing happening.

I canceled it. Tried again.

Same thing. Canceled.

Tried again. Same thing.

Problem #3: Out of Memory (The VPS Killer)

My VPS has 3.8GB of RAM. That should be enough, right?

Wrong.

The build was trying to transform framer-motion and just… ran out of memory. No error. No crash.

Just… stopped responding.

What I Did:

First, I checked the resource usage:

htop

RAM was nearly maxed out (about 3 GB used of 3.8 GB), and there was no swap at all.

That explains why Vite kept stalling once the build ramped up.

The culprit?

My VS Code remote session was consuming massive amounts of memory, nearly maxing out the RAM on my VPS.

Killed unnecessary processes:

# VS Code servers were eating 1GB+ each

pkill -f .vscode-server

Those VS Code server processes were consuming over a gig each.

With them gone, I freed up a lot of memory.

(Yes, I got kicked out of VS Code remote, but it was worth it.)

I ran the build again, and it worked!

Finally.

The build completed in about 3 minutes.
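If you want a guard against repeating this, a small pre-build check is one option. This is a minimal sketch using Node's built-in os module; the 1.5 GB threshold is a guess for illustration, not a measured requirement for Vite, and `memoryReport` is a hypothetical name.

```javascript
const os = require('node:os');

// Hypothetical threshold: a guess, not a measured requirement for the build.
const REQUIRED_FREE_BYTES = 1.5 * 1024 ** 3;

// Summarize free vs. total memory and say whether the threshold is met.
function memoryReport(freeBytes, totalBytes, requiredBytes = REQUIRED_FREE_BYTES) {
  const toGb = (bytes) => (bytes / 1024 ** 3).toFixed(1);
  return {
    ok: freeBytes >= requiredBytes,
    message: `${toGb(freeBytes)} GB free of ${toGb(totalBytes)} GB`,
  };
}

const report = memoryReport(os.freemem(), os.totalmem());
console.log(report.ok ? 'OK to build:' : 'Low memory, free some up first:', report.message);
```

You could run something like this before npm run build so a memory-starved server fails fast instead of hanging for ten minutes.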

Problem #4: Case Sensitivity (The “Works on My Machine” Classic)

After the build finally worked, I got this error:

Could not resolve "../../components/shared/filterTabs"

But it worked on my Windows machine!

What gives?

Oh right.

Linux is case-sensitive. Windows isn’t. The file was actually FilterTabs.jsx (capital F and T), but my import was lowercase.

Fixed the import, rebuilt, done. Classic.
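This kind of mismatch can be caught before deploying, even on Windows, because the directory listing still reports the real casing. Below is a minimal sketch of that idea; `findCaseMismatch` and `checkImport` are hypothetical helpers, and the wrapper only handles imports that point at a file in an existing directory.

```javascript
const fs = require('node:fs');
const path = require('node:path');

// Given the real names in a directory and the name an import asked for,
// return the entry that differs only by letter case (or null if none does).
function findCaseMismatch(entries, wanted) {
  if (entries.includes(wanted)) return null; // exact match, nothing to report
  const lower = wanted.toLowerCase();
  return entries.find((entry) => entry.toLowerCase() === lower) ?? null;
}

// Hypothetical wrapper: compare an extension-less import target against
// the files actually on disk next to it.
function checkImport(fromFile, importPath) {
  const target = path.resolve(path.dirname(fromFile), importPath);
  const names = fs
    .readdirSync(path.dirname(target))
    .map((file) => path.parse(file).name);
  return findCaseMismatch(names, path.basename(target));
}

console.log(findCaseMismatch(['FilterTabs', 'Header'], 'filterTabs'));
```

A non-null result means the import would resolve on a case-insensitive filesystem but fail on Linux, which is exactly what happened with FilterTabs.jsx.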

Deploying the Static Files

sudo mkdir -p /var/www/frontend

sudo rsync -av --delete dist/ /var/www/frontend/

Nginx Config for Frontend

server {

listen 80;

listen [::]:80;

server_name mydomain.com www.mydomain.com;

root /var/www/frontend;

index index.html;

# SPA fallback (important for React Router!)

location / {

try_files $uri $uri/ /index.html;

}

# Cache static assets (performance!)

location ~* \.(jpg|jpeg|png|gif|ico|css|js|svg|woff|woff2)$ {

expires 1y;

add_header Cache-Control "public, immutable";

}

}

Enabled it, got SSL, done.

Tested it:

curl -I https://mydomain.com

HTTP/2 200. Frontend is live.

Both backend and frontend are now running in production.

The app is live. After 2 hours of debugging, it’s finally done.

The Real Takeaways

- Docker networking is different: localhost inside a container ≠ localhost on the host. Use service names. Understanding Docker’s networking model is crucial—check the official Docker networking documentation for deeper insights.

- Memory matters: Small VPS? Monitor resource usage with htop and identify what’s consuming your memory. Sometimes it’s unexpected processes like VS Code remote sessions.

- Case sensitivity will bite you: Test builds on Linux before deploying. Windows and macOS filesystems are case-insensitive by default; Linux filesystems are case-sensitive. This catches many developers off guard.

- node-redis v4 changed things: Pass a url (or socket.host and socket.port) to createClient. The old top-level host/port options are silently ignored. The Redis Node.js client documentation has the latest configuration details.

- Read the logs: Seriously. The answers are usually there, buried in error messages. Structured logging makes this much easier.

- It’s okay to struggle: I spent 2 hours on what should have been 30 minutes. That’s normal. Deployment is hard, it just is.

Additional Resources

If you want to dive deeper into any of these topics, here are some valuable resources:

Docker Networking Documentation: Comprehensive guide to Docker’s networking model

Nginx Reverse Proxy Guide: Official Nginx proxy module documentation

Let’s Encrypt Documentation: Everything you need to know about free SSL certificates

Redis Node.js Client: Official Redis client for Node.js with migration guides

Node.js Production Best Practices: Comprehensive checklist for Node.js production deployments

Linux File System Commands Guide: If you’re new to Linux, this covers all the essential file system commands

Vim Editor Guide: Step-by-step guide to using vim (including how to exit it—because we’ve all been there)

Final Thoughts

Look, deployment is hard. It just is. But it’s also… kind of satisfying when everything finally works?

I’m writing this because I wish I had this guide when I started.

Not the perfect, sanitized version—but the real one. The one where things break and you have to figure out why.

If you’re reading this and you’re stuck, know that you’re not alone. I was stuck too. We all are, at some point.

Want More?

If enough people want it, I’m thinking of making a video walkthrough.

Less reading, more watching.

If that sounds useful, drop a comment below and let me know.

I’ll prioritize it if there’s interest.

And hey—if you’ve deployed something recently, share your war stories in the comments.

What broke?

What surprised you?

What would you do differently?

We’re all learning together.

P.S. If you found this helpful, share it with someone who’s about to deploy their first app. Save them the 2 hours I spent debugging Redis connections.